Last Monday we looked at Tango, a system for replicating a data structure to provide linearizable semantics and fault tolerance. Tango is built on CORFU, a replicated log that is itself built over storage nodes. These paper notes cover “CORFU: A distributed shared log”, also by Balakrishnan et al., from TOCS December 2013. I believe that this paper elaborates on “CORFU: A Shared Log Design for Flash Clusters” by Balakrishnan et al. from NSDI 2012 and that the NSDI paper is not a prerequisite for the TOCS paper.

What do we already know?

From the Tango paper, we learned that CORFU provides a replicated log with support for the following operations: append, check, read, trim and fill.

Its two main components are a sequencer, which hands out addresses in the log, and storage nodes (such as SSDs), which store log entries. Storage nodes are divided into clusters and a variant of chain replication is used between them. Each cluster is responsible for a subset of log addresses.

Summary

This paper presents CORFU, a shared log distributed over storage nodes. Its main advantage over existing systems is its scalability, as it is not bottlenecked by the I/O of a single host.

Local addresses -> physical addresses

Each storage node exposes an infinite write-once logical address space with read, write, delete and fill operations for each address. Delete is used when the data at a particular logical address is no longer required: its physical address can be reused but its logical address cannot. Fill is used to mark that a logical address will not be used in the future. These are implemented using a hash table with various optimizations. A seal operation is also provided, which locks a storage node so that it only serves requests carrying an equal or higher epoch number.
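To make the write-once semantics concrete, here is a minimal in-memory sketch of a storage node. It is my own illustration rather than the paper's implementation: the names `StorageNode`, `State`, `SealedError` and `WrittenError` are hypothetical, a plain dict stands in for the hash table, and physical page reuse is not modelled.

```python
from enum import Enum


class SealedError(Exception):
    """Request carried an epoch older than the node's sealed epoch."""


class WrittenError(Exception):
    """The logical address has already been written or filled."""


class State(Enum):
    WRITTEN = 1
    FILLED = 2   # marked as never to be used
    DELETED = 3  # data dropped; the logical address is still consumed


class StorageNode:
    def __init__(self):
        self.epoch = 0
        self.pages = {}  # logical address -> (State, data)

    def _check_epoch(self, epoch):
        if epoch < self.epoch:
            raise SealedError(f"stale epoch {epoch}, node is at {self.epoch}")

    def seal(self, epoch):
        # Assumption: sealing never lowers the epoch.
        self.epoch = max(self.epoch, epoch)

    def write(self, epoch, addr, data):
        self._check_epoch(epoch)
        if addr in self.pages:
            raise WrittenError(addr)
        self.pages[addr] = (State.WRITTEN, data)

    def fill(self, epoch, addr):
        self._check_epoch(epoch)
        if addr in self.pages:
            raise WrittenError(addr)
        self.pages[addr] = (State.FILLED, None)

    def read(self, epoch, addr):
        self._check_epoch(epoch)
        # (None, None) signals an address that has not been written yet.
        return self.pages.get(addr, (None, None))

    def delete(self, epoch, addr):
        self._check_epoch(epoch)
        # The entry stays in the table so the address cannot be rewritten.
        self.pages[addr] = (State.DELETED, None)
```

Keeping deleted and filled addresses in the table is what makes the address space write-once in this sketch: a second `write` to the same address always fails, whatever happened to the data.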

Global addresses -> local addresses

Each client resolves a global address into a set of nodes and a local address in two stages. First, the client uses a local copy of a mapping from ranges in the global address space to disjoint subsets of hosts. For example, addresses 0-100K map to replica sets A-C and addresses 100K-200K map to replica sets D-F. Second, a deterministic function (like mod and div) maps the specific global address to a local address on a specific replica set like A or C.
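The two-stage lookup can be sketched as follows. This is only my reading of the scheme: the `PROJECTION` table reuses the example ranges above, and the round-robin use of mod and div within a range is an assumption about what the deterministic function looks like. The names `PROJECTION` and `resolve` are hypothetical.

```python
# Hypothetical projection: (range start, range end exclusive, replica sets).
PROJECTION = [
    (0, 100_000, ["A", "B", "C"]),
    (100_000, 200_000, ["D", "E", "F"]),
]


def resolve(global_addr, projection=PROJECTION):
    """Map a global log address to (replica set, local address)."""
    for start, end, replica_sets in projection:
        if start <= global_addr < end:
            offset = global_addr - start
            # Stage 2: stripe the range round-robin across its replica sets.
            replica_set = replica_sets[offset % len(replica_sets)]
            local_addr = offset // len(replica_sets)
            return replica_set, local_addr
    raise KeyError(f"address {global_addr} is not covered by the projection")


print(resolve(0))        # ('A', 0)
print(resolve(5))        # ('C', 1)
print(resolve(100_002))  # ('F', 0)
```

Because consecutive global addresses land on different replica sets, consecutive appends are spread across the cluster rather than queuing on one host. Note that this sketch only works because each replica set appears in a single range; otherwise local addresses would collide.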

Replication

Replicas are written to using client-driven chain replication: the client writes to each replica in a deterministic order and waits for a successful acknowledgment from each storage node before continuing. As a result, write latency scales linearly with the number of replicas. In contrast, majority-based protocols like Raft, Paxos and VRR can replicate a write in as little as 1 RTT, regardless of the number of nodes, in the right conditions. The downside of such protocols is that to tolerate f failures we need 2f+1 nodes instead of f+1.
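A minimal sketch of the client-driven write path, counting sequential round trips to show why latency grows with chain length. The `Replica` class, `chain_write` and `nodes_needed` are hypothetical names of my own, and failure handling is reduced to raising on an already-written address.

```python
class OverwriteError(Exception):
    """A replica already holds data at the requested address."""


class Replica:
    def __init__(self, name):
        self.name = name
        self.pages = {}

    def write(self, addr, data):
        if addr in self.pages:
            raise OverwriteError(f"{self.name}: address {addr} already written")
        self.pages[addr] = data


def chain_write(chain, addr, data):
    """Write to each replica in order; return the number of round trips."""
    round_trips = 0
    for replica in chain:
        replica.write(addr, data)  # wait for the ack before moving on
        round_trips += 1
    return round_trips


def nodes_needed(f, majority_based):
    """Nodes required to tolerate f failures under each approach."""
    return 2 * f + 1 if majority_based else f + 1


chain = [Replica("A"), Replica("B"), Replica("C")]
print(chain_write(chain, 0, b"entry"))  # 3 sequential round trips
print(nodes_needed(1, majority_based=False), nodes_needed(1, majority_based=True))  # 2 3
```

The trade-off is visible in the two helpers: the chain needs fewer nodes for the same f, but pays one round trip per replica on every write.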

If a client fails to complete this process then the incomplete entry may be filled in by the next client. Like Raft, this means that clients may be given false negatives, and thus the application utilizing the log must be able to handle this. For example, Raft uses operation IDs and caches to prevent multiple application of the same operation to a state machine in SMR.
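One way an application could tolerate a false negative is to tag each operation with an ID and remember the result of every applied operation, so that a retried operation is not applied twice. The sketch below is a generic illustration of that idea with hypothetical names (`DedupStateMachine`, `op_id`), not code from CORFU or Raft.

```python
class DedupStateMachine:
    """A counter that applies each operation ID at most once."""

    def __init__(self):
        self.value = 0
        self.applied = {}  # op_id -> result returned the first time

    def apply(self, op_id, delta):
        if op_id in self.applied:
            # Duplicate: return the cached result without reapplying.
            return self.applied[op_id]
        self.value += delta
        self.applied[op_id] = self.value
        return self.value


sm = DedupStateMachine()
print(sm.apply("client1-op1", 5))  # 5
print(sm.apply("client1-op1", 5))  # 5 again, the retry is ignored
print(sm.apply("client1-op2", 2))  # 7
```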

A more important failure case is where a client fails to see a committed write because the replica it is querying was removed from the replica set due to a network partition or failure, and the client is not aware of this change. This is addressed by issuing leases to the storage units from the sequencer.

Changing projections

Corfu’s sequencer is in some ways analogous to the coordinator/master in traditional protocols. Likewise, Corfu’s changing projection has many parallels with VRR’s view changes or Raft’s term changes. In all cases, a monotonically increasing value (known as the epoch number/view number/term) separates different perspectives on system configuration. Example perspectives include a period of leadership in Raft or a set of projections in CORFU. Each node stores its perspective of this value and each message between nodes includes it. A projection change is initiated by a client, then agreed by storage nodes using a Paxos-like consensus protocol, and each (involved) storage node is sealed in the process. Clients learn of the projection change when they contact storage nodes (since their request includes an outdated epoch) and they retrieve the new projection information from a networked storage drive.
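The client side of a projection change can be sketched as below. This is a heavily simplified illustration under my own assumptions: `ProjectionStore` stands in for the networked storage drive, its `propose` method stands in for the Paxos-like agreement (the first proposal for an epoch wins), a projection is just a list of nodes, and sealing happens before the new projection is published. All names are hypothetical.

```python
class StaleEpoch(Exception):
    """The request's epoch is older than the node's sealed epoch."""


class Node:
    def __init__(self):
        self.epoch = 0
        self.pages = {}

    def seal(self, epoch):
        self.epoch = max(self.epoch, epoch)

    def read(self, epoch, addr):
        if epoch < self.epoch:
            raise StaleEpoch(self.epoch)
        return self.pages.get(addr)


class ProjectionStore:
    """Stand-in for the shared drive; list index doubles as the epoch."""

    def __init__(self, initial):
        self.projections = [initial]

    def latest(self):
        return len(self.projections) - 1, self.projections[-1]

    def propose(self, epoch, projection):
        if epoch != len(self.projections):
            return False  # another client already installed this epoch
        self.projections.append(projection)
        return True


def reconfigure(store, new_projection):
    epoch, old_projection = store.latest()
    for node in old_projection:
        node.seal(epoch + 1)  # old-epoch requests are rejected from now on
    return store.propose(epoch + 1, new_projection)


class Client:
    def __init__(self, store):
        self.store = store
        self.epoch, self.projection = store.latest()

    def read(self, addr):
        for _ in range(2):  # one retry after refreshing the projection
            try:
                return self.projection[-1].read(self.epoch, addr)
            except StaleEpoch:
                self.epoch, self.projection = self.store.latest()
        raise StaleEpoch(self.epoch)


old, new = Node(), Node()
old.pages[0] = new.pages[0] = "entry"
store = ProjectionStore([old])
client = Client(store)
reconfigure(store, [new])
print(client.read(0), client.epoch)  # entry 1
```

The client never hears about the reconfiguration directly. It discovers it only because the sealed node rejects its stale epoch, at which point it fetches the latest projection and retries.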

My interpretation of changing projections is that each projection change can include a completely new configuration. This mechanism provides us with dynamic membership, in addition to a mechanism for dealing with network failures and partitions.

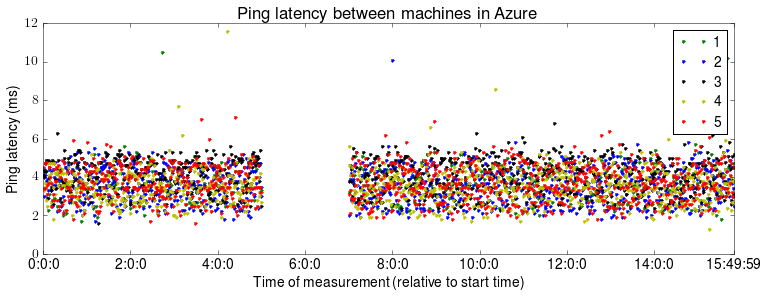

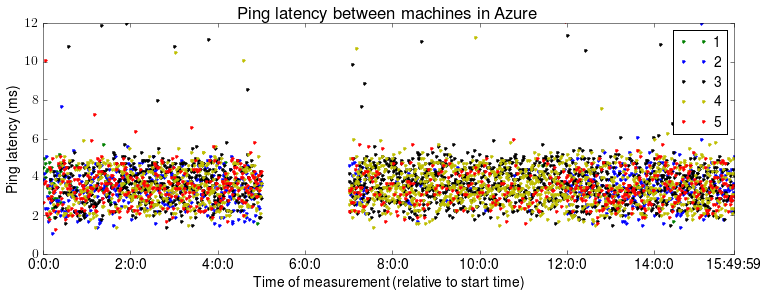

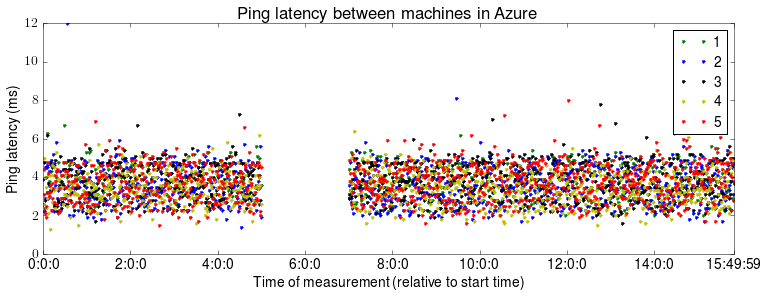

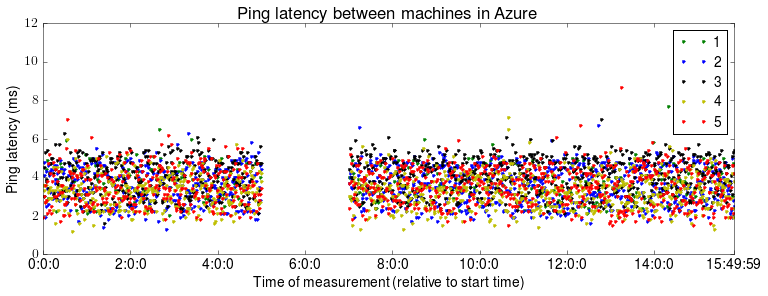

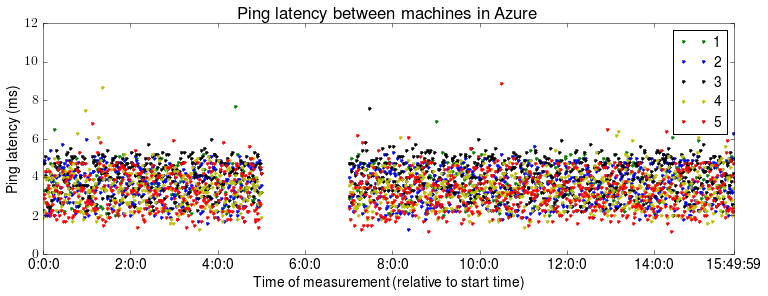

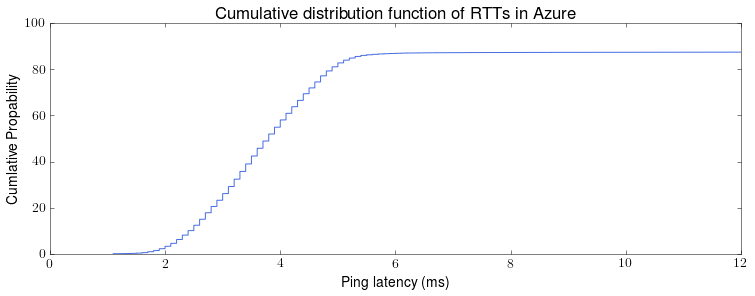

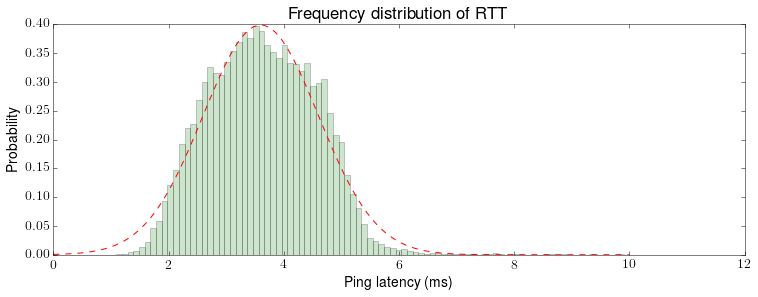

Evaluation

The authors’ experimental evaluation seems very promising. In some ways, it is difficult for me to determine which gains are from the hardware and which are from CORFU’s design. All of the experiments use two replicas per cluster, so just two failures are capable of bringing the system down. Given the use of client-driven chain replication, I would really like to see how the system scales (particularly its latency) with more replicas.

Conclusions

Corfu is a very interesting system and seems to be a novel point in the solution space of distributed logs. Next time, we will take a look at the open-source implementation, CorfuDB.