The Network is Reliable is an excellent article which attempts to formalise the discussion on real-world failures in distributed systems. There is currently great debate on whether the assumption that network partitions are rare is too strong or too weak for modern networks. Much of the data which we could use to answer this question is not published; instead, it takes the form of anecdotes shared between sysadmins over a beer. This article lets the reader sit at the table and share the stories.

It is worth noting that network partition is used here in the broadest possible sense. We are looking at end-to-end communications and anything which can hinder these. This goes beyond simple link and switch failures to distributed GC, bugs in the NIC, and slow IO.

Here are five examples of the anecdotes covered:

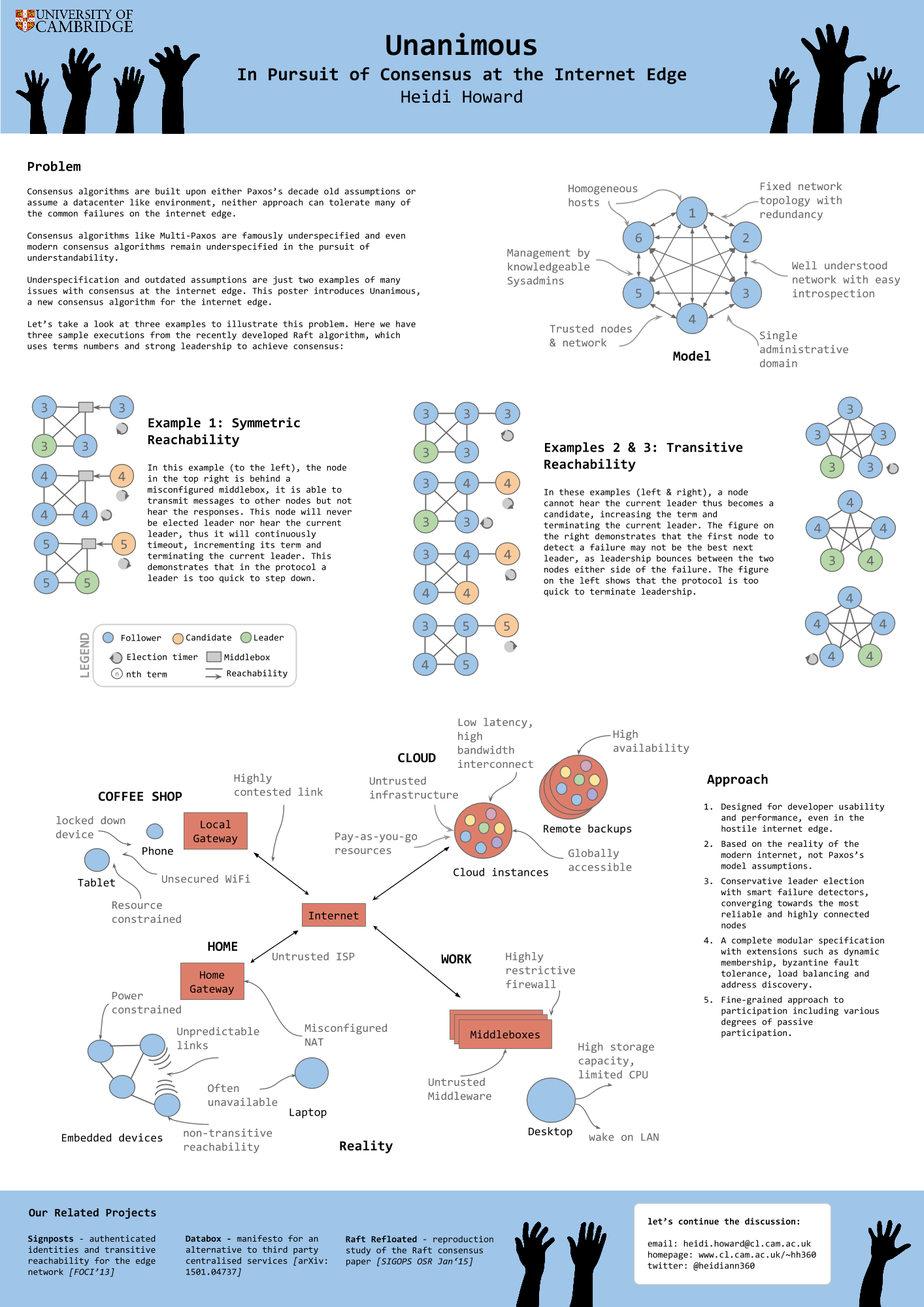

- split brain caused by non-transitive reachability on EC2

- redundancy doesn’t always prevent link failure

- asymmetric reachability due to bugs in the NIC

- GC and blocking for I/O can cause huge runtime latencies

- short transient failures become long-term problems with existing algorithms
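To make the first anecdote concrete, here is a minimal sketch of how non-transitive reachability can lead to split brain. It is not taken from the article: the node names, the `links` set and the naive "lead if I can reach a majority" rule are all assumptions chosen for illustration, and real leader election protocols are considerably more careful.

```python
def reachable_peers(node, links):
    """Return the peers that `node` can exchange messages with (links are bidirectional)."""
    return {b if a == node else a for a, b in links if node in (a, b)}


def believes_it_can_lead(node, nodes, links):
    """Naive, hypothetical rule: a node may lead if it and its reachable peers form a strict majority."""
    return 2 * (len(reachable_peers(node, links)) + 1) > len(nodes)


# Hypothetical three-node cluster: a and b can each reach c, but not each other.
nodes = {"a", "b", "c"}
links = {("a", "c"), ("b", "c")}

would_be_leaders = sorted(n for n in nodes if believes_it_can_lead(n, nodes, links))
print(would_be_leaders)
```

Under this rule, `a` and `b` each see a majority (themselves plus `c`) and so both consider themselves eligible to lead, even though they cannot communicate with one another. Reachability here is not transitive, which is exactly the situation a simple majority check fails to detect.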

So, what does this mean for Unanimous and my research on reliable consensus for real world networks?

My original inspiration for working on consensus algorithms was the need for reliable consensus at the internet edge. With consensus comes fault tolerance, and from this we can construct new systems for preserving privacy and handling personal data, offering users a viable alternative to 3rd party centralised services.

However, it has become apparent that the issue of reliable consensus is much more general. This article illustrates that even within the constrained setting of a datacenter, distributed systems are failing to tolerate the wide range of possible failures.