Below are my draft slides for next week’s talk at the 2nd Annual Oxbridge Women in Computer Science Conference in Oxford. The talk abstract is in a previous post. Any feedback would be greatly appreciated (note that the speaker notes are a work in progress).

Raft Refloated in The Morning Paper

I am incredibly excited to announce that Raft Refloated will be featured in The Morning Paper, by Adrian Colyer, as part of a series of 10 posts on distributed consensus. Last month, The Morning Paper also featured our work on the Databox.

Will the Raft paper sink or swim? Let’s wait and see.

Paper Notes: Redirecting DNS for Ads and Profit

Redirecting DNS for Ads and Profit is one of a collection of papers from the ICSI team presenting results from Netalyzr, their network diagnosis tool. This paper focuses on the 66K session traces in which DNS error traffic has been monetized, and calls out Paxfire for its role in this area. It concentrates on NXDOMAIN wildcarding and search engine proxying (see my past post on how middleboxes interfere with DNS for an introduction to these techniques). The authors acknowledge the unrepresentative sample of Netalyzr users and the high number of sessions using OpenDNS or Comcast DNS resolvers.

NXDOMAIN wildcarding is discouraged by ICANN and can have serious implications for DNS traffic that does not come from web browsers (some resolvers only rewrite lookups starting with www. to try to prevent this). In many cases, redirection servers do not simply use HTTP 302.
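As a rough illustration of how a client might notice NXDOMAIN wildcarding, here is a minimal Python sketch. It looks up a few random names that should not exist and reports wildcarding if every one of them resolves. The function names, the `com` suffix and the three-probe default are my own assumptions for illustration, not the method used by Netalyzr. The probes start with `www.` because some resolvers only rewrite such lookups.

```python
import secrets
import socket
from typing import Callable, Optional


def random_probe_name(suffix: str = "com") -> str:
    """Build a name that almost certainly does not exist (hypothetical helper)."""
    return f"www.{secrets.token_hex(16)}.{suffix}"


def system_resolve(name: str) -> Optional[str]:
    """Resolve via the system resolver; None stands in for NXDOMAIN or any failure."""
    try:
        return socket.gethostbyname(name)
    except OSError:
        return None


def looks_wildcarded(
    resolve: Callable[[str], Optional[str]] = system_resolve,
    probes: int = 3,
) -> bool:
    """Return True if every random, non-existent name still gets an answer."""
    answers = [resolve(random_probe_name()) for _ in range(probes)]
    return all(answer is not None for answer in answers)
```

Calling `looks_wildcarded()` with no arguments uses the system resolver. Passing a custom `resolve` function makes it possible to point the check at a specific resolver or to test it without touching the network.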

The highlight of this paper was the fake NXDOMAIN opt-out offered by Paxfire, where the ad server simply served the user’s browser’s error page.

DNSSEC may provide authenticated denial of existence, but this doesn’t necessarily fix the problem. For example, Xerocole offers DNS resolvers with the option to simply rewrite DNSSEC-signed NXDOMAIN responses without a signature, on the assumption that the client will not validate DNSSEC.

OpenDNS wildcards NXDOMAIN and SERVFAIL errors, and also directs users to the redirection server if the requested server supports only IPv6. This is provided as an option in D-Link routers.

The study observed more than 12 ISPs using Squid proxies to redirect search engine traffic. The study did not observe resolver-independent NXDOMAIN redirection but did see NATs redirecting all DNS requests (regardless of resolver) to the configured recursive resolver, thus creating in-path NXDOMAIN rewriting if the new resolver uses NXDOMAIN wildcarding.

This paper is a fun, light read that I would recommend, though its results are a bit out of date now, as it used data from Jan 2010 to May 2011.

Talk & Poster @ 2nd Annual Oxbridge Women in Computer Science Conference

I’ve been accepted for a talk and a poster at the 2nd Annual Oxbridge Women in Computer Science Conference on 16th March 2015. My submitted abstract is here.

We’re coming to a letter box near you

The January edition of the Operating Systems Review is now out; look out for “Raft Refloated: Do We Have Consensus?”. Also available without dead trees.

This course may be of interest to readers: “Fog Networks and the Internet of Things”.

This course teaches the fundamentals of Fog Networking, the network architecture that uses one or a collaborative multitude of end-user clients or near-user edge devices to carry out storage, communication, computation, and control in a network. It also teaches the key results in the design of the Internet of Things, including consumer and industrial applications.

Link: https://www.coursera.org/course/fog

New abstraction for edge network systems

[this post is also available as a pdf]

The end-to-end principle of the internet is a fallacy. Modern distributed systems rely on the cloud rather than deal with the complexity of the edge network. We propose to explore how to provide primitives such as consistency, integrity, accessibility and authentication in the context of edge network distributed systems.

Motivation

The internet has abandoned the end-to-end principles on which it was established [2]. With IPv4 addresses depleted and the transition to IPv6 yet to restore public identities, devices are left behind NATs and firewalls. Instead of dealing with the complexity of the edge network, users opt to use centralized cloud services, offering usability and high availability.

It’s a jungle on the edge network. By Original – Highways Agency [CC BY 2.0], via Wikimedia Commons

In response to this demand, developers are building new applications for the edge network. They are reimplementing solutions to establishing authenticated identities, consensus and availability in the face of mobile nodes, network partitions and asymmetric channels. Without a clear stack and layers of abstraction, systems fail to provide even the most basic safety guarantees. Protocols are layered on top of each other without formal agreement on the services provided at each layer. Even after this engineering effort by developers, systems still require intricate configuration to deal with the diversity of devices, middleboxes and network environments [6] on the edge network, if they are able to work at all.

Challenges

For this discussion we make the following distinction. Data requirements are needs specified by the application; for example, a distributed file system may specify that file metadata must be strongly consistent whilst the files themselves need only be eventually consistent. In contrast, environmental requirements are the set of network environments that the application needs to operate in. For example, an application might specify that the nodes may be mobile and intermittently connected, but that there will always be a cloud node which is highly reliable and publicly addressable but runs on untrusted infrastructure.
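To make the distinction concrete, the two examples above could be written down roughly as follows. This is only a sketch in Python: the class and field names are hypothetical and chosen for this post, and a real specification language would need to be far richer.

```python
from dataclasses import dataclass
from enum import Enum


class Consistency(Enum):
    EVENTUAL = 1
    STRONG = 2


@dataclass(frozen=True)
class DataRequirement:
    """A need stated by the application about one kind of data (hypothetical)."""
    item: str
    consistency: Consistency


@dataclass(frozen=True)
class EnvironmentalRequirement:
    """The network environment the application must tolerate (hypothetical)."""
    mobile_nodes: bool
    intermittent_connectivity: bool
    reliable_cloud_node: bool
    cloud_node_publicly_addressable: bool
    cloud_node_trusted: bool


# The distributed file system example: strong metadata, eventual file contents.
file_system_data = [
    DataRequirement("file metadata", Consistency.STRONG),
    DataRequirement("file contents", Consistency.EVENTUAL),
]

# The environment example: mobile, flaky nodes plus a reliable but untrusted cloud node.
file_system_env = EnvironmentalRequirement(
    mobile_nodes=True,
    intermittent_connectivity=True,
    reliable_cloud_node=True,
    cloud_node_publicly_addressable=True,
    cloud_node_trusted=False,
)
```

Keeping the two kinds of requirement in separate types mirrors the split in the text: the first is about what the application wants from its data, the second about where it has to run.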

Our focus on the edge network means we lose the data center assumptions that have been typical in distributed systems for decades. The environmental requirements now span:

We’re in the real world, no longer the datacenter. By Hugovanmeijeren (Own work) [GFDL or CC BY-SA 3.0], via Wikimedia Commons

- Heterogeneous network topologies: Middleboxes plague the edge network. Network topologies are complex, devices may have asymmetric reachability, there is a wide range of link characteristics and traffic can be treated differently depending on its class.

- Mobile nodes: We can no longer rely on IP addresses to identify nodes. Nodes may move between networks and have multiple network interfaces. Intermittent connectivity and network partitions are common.

- Diverse hardware: Devices can vary in their constraints on CPU, power supply or memory. Utilizing different networks may come at different costs.

- New failure models: We no longer assume homogeneous trust between nodes. Different nodes are subject to different failure models and expected failure patterns.

Developers make crude assumptions about their applications’ requirements. The data requirement space is large; it includes some regions that have been proved impossible and others which may prove impossible.

Research Questions

The key research question is how we can provide services such as consistency, accessibility and authentication in the context of edge network distributed systems. This encompasses other questions such as:

- Which areas of the space of data and environmental requirements are covered by existing distributed algorithms, which areas are not yet covered and which areas are provably impossible to cover?

- How can we formally express the assumptions and guarantees of distributed algorithms and their trade-offs, data and environmental requirements such that our engine can resolve them?

- How can we evaluate such systems given the diversity of possible environmental requirements and combinations of data requirements?

- How can we ensure that the distributed algorithms provide the stated guarantees under the assumptions? How can we construct and reason about these algorithms such that they provide stronger guarantees than conventional systems?

- How can we combine the above to provide a stack of protocols which fulfils the data requirements, given the environmental requirements?

Approach

We propose a new common abstraction between applications and networked devices to form personal clouds. Programmers (and ultimately users) formally specify the data and environmental requirements. These requirements span domains in fault tolerance, replication, consistency, caching, accessibility, security levels and confidentiality. From a collection of distributed algorithms, each with their own set of formally specified assumptions and guarantees, an engine will stack the protocols to meet the data requirements under the environmental requirements. From this foundation, we can build new distributed systems including new systems for personal data [4]. We are currently considering building upon a suite of existing tools in this domain including a unikernel operating system [10], TLS implementation [11], a git-style distributed data store [3] and Raft consensus implementation [5].
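As a toy illustration of what such an engine might do, the Python sketch below treats assumptions and guarantees as plain sets of labels. It repeatedly picks an algorithm whose assumptions are already met, either by the environment or by the guarantees of layers lower in the stack, until the data requirements are covered. Everything here is an assumption made for illustration: the `Algorithm` type, the `stack_protocols` function and the catalogue entries are hypothetical, and the greedy search does not try to find a minimal stack.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set


@dataclass(frozen=True)
class Algorithm:
    """A distributed algorithm with labelled assumptions and guarantees (hypothetical)."""
    name: str
    assumptions: FrozenSet[str]
    guarantees: FrozenSet[str]


def stack_protocols(
    algorithms: List[Algorithm],
    environment: Set[str],
    required: Set[str],
) -> Optional[List[str]]:
    """Greedily build a stack, bottom first; None if the requirements cannot be met."""
    available = set(environment)   # properties provided so far
    remaining = list(algorithms)   # algorithms not yet placed in the stack
    stack: List[str] = []
    progress = True
    while not required <= available and progress:
        progress = False
        for algo in remaining:
            usable = algo.assumptions <= available
            adds_something = not algo.guarantees <= available
            if usable and adds_something:
                stack.append(algo.name)
                available |= algo.guarantees
                remaining.remove(algo)
                progress = True
                break  # restart the scan with the enlarged set of properties
    return stack if required <= available else None


# Hypothetical catalogue: labels are illustrative, not formal specifications.
catalogue = [
    Algorithm("consensus",
              frozenset({"authenticated channels", "majority reachable"}),
              frozenset({"strong consistency"})),
    Algorithm("secure transport",
              frozenset({"reliable cloud node"}),
              frozenset({"authenticated channels"})),
    Algorithm("gossip", frozenset(), frozenset({"eventual consistency"})),
]

result = stack_protocols(
    catalogue,
    {"reliable cloud node", "majority reachable"},
    {"strong consistency"},
)
```

With this catalogue, the consensus layer only becomes usable once the secure transport layer has supplied authenticated channels, which is the kind of dependency a real engine would need to resolve formally rather than by matching labels.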

State of the Art

Sapphire [13] is a programming platform to separate application and deployment logic in cloud and mobile applications. Whilst Sapphire’s motivation is similar to ours, it covers a limited space of data requirements and environmental requirements and doesn’t provide any guarantees to applications running on the platform.

The systems community is beginning to design distributed protocols specifically to tolerate the edge network, such as achieving consistency in an environment of heterogeneous trust [12, 7]. But quantifying the environmental requirements of such protocols requires a much richer abstraction than those currently used. Some authors [1, 9] suggest we can provide stronger guarantees for distributed protocols by changing the basic programming constructs and languages used. This is something we intend to explore further.

REFERENCES

[1] Peter Alvaro, Tyson Condie, Neil Conway, Joseph M. Hellerstein, and Russell Sears. I do declare: Consensus in a logic language. SIGOPS Oper. Syst. Rev., 43(4), January 2010.

[2] Marjory S. Blumenthal and David D. Clark. Rethinking the design of the Internet: the end-to-end arguments vs. the brave new world. ACM Transactions on Internet Technology, August 2001.

[3] Thomas Gazagnaire. Irminsule; a branch-consistent distributed library database. OCaml 2014 Workshop, 2014.

[4] Hamed Haddadi, Heidi Howard, Amir Chaudhry, Jon Crowcroft, Anil Madhavapeddy, and Richard Mortier. Personal data: Thinking inside the box. arXiv preprint arXiv:1501.04737, 2015.

[5] Heidi Howard, Malte Schwarzkopf, Anil Madhavapeddy, and Jon Crowcroft. Raft refloated: Do we have consensus? SIGOPS Oper. Syst. Rev., 49(1), January 2015.

[6] Christian Kreibich, Nicholas Weaver, Boris Nechaev, and Vern Paxson. Netalyzr: illuminating the edge network. In Proceedings of the 10th annual conference on Internet measurement, IMC ’10, pages 246–259. ACM, 2010.

[7] Jed Liu, Michael D. George, K. Vikram, Xin Qi, Lucas Waye, and Andrew C. Myers. Fabric: A platform for secure distributed computation and storage. In Proceedings of the ACM SIGOPS 22nd Symposium on Operating Systems Principles, SOSP ’09, 2009.

[8] Ewa Luger, Stuart Moran, and Tom Rodden. Consent for all: Revealing the hidden complexity of terms and conditions. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pages 2687–2696. ACM, 2013.

[9] Anil Madhavapeddy. Combining static model checking with dynamic enforcement using the statecall policy language. In Proceedings of the 11th International Conference on Formal Engineering Methods: Formal Methods and Software Engineering, pages 446–465. Springer-Verlag, 2009.

[10] Anil Madhavapeddy, Richard Mortier, Charalampos Rotsos, David Scott, Balraj Singh, Thomas Gazagnaire, Steven Smith, Steven Hand, and Jon Crowcroft. Unikernels: Library operating systems for the cloud. In Proceedings of the Eighteenth International Conference on Architectural Support for Programming Languages and Operating Systems, ASPLOS ’13, 2013.

[11] Hannes Mehnert and David Kaloper Mersinjak. Transport layer security purely in OCaml. In OCaml Workshop, 2012.

[12] Isaac C Sheff, Robbert van Renesse, and Andrew C Myers. Distributed protocols and heterogeneous trust: Technical report. arXiv preprint arXiv:1412.3136, 2014.

[13] Irene Zhang, Adriana Szekeres, Dana Van Aken, Isaac Ackerman, Steven D Gribble, Arvind Krishnamurthy, and Henry M Levy. Customizable and extensible deployment for mobile/cloud applications. In Proceedings of the 11th USENIX conference on Operating Systems Design and Implementation, pages 97–112. USENIX Association, 2014.

Distributed Protocols and Heterogeneous Trust: a theory-heavy paper on adapting Byzantine-tolerant distributed protocols for heterogeneous trust relationships

Things are heating up at the edge network. Matrix.org is a startup for a decentralised chat client with a clean JSON API, though it doesn’t seem to have much of a story for dealing with middleboxes.

Raft Refloated: Do We Have Consensus?

The January edition of SIGOPS Operating Systems Review is out now, and so is the aptly named “Raft Refloated: Do We Have Consensus?”. This is my first journal paper and I’m really excited to see what the community makes of it.

Title: Raft Refloated: Do We Have Consensus?

Authors: Heidi Howard, Malte Schwarzkopf, Anil Madhavapeddy and Jon Crowcroft

Paper: acm dl, open access link

Abstract: The Paxos algorithm is famously difficult to reason about and even more so to implement, despite having been synonymous with distributed consensus for over a decade. The recently proposed Raft protocol lays claim to being a new, understandable consensus algorithm, improving on Paxos without making compromises in performance or correctness.

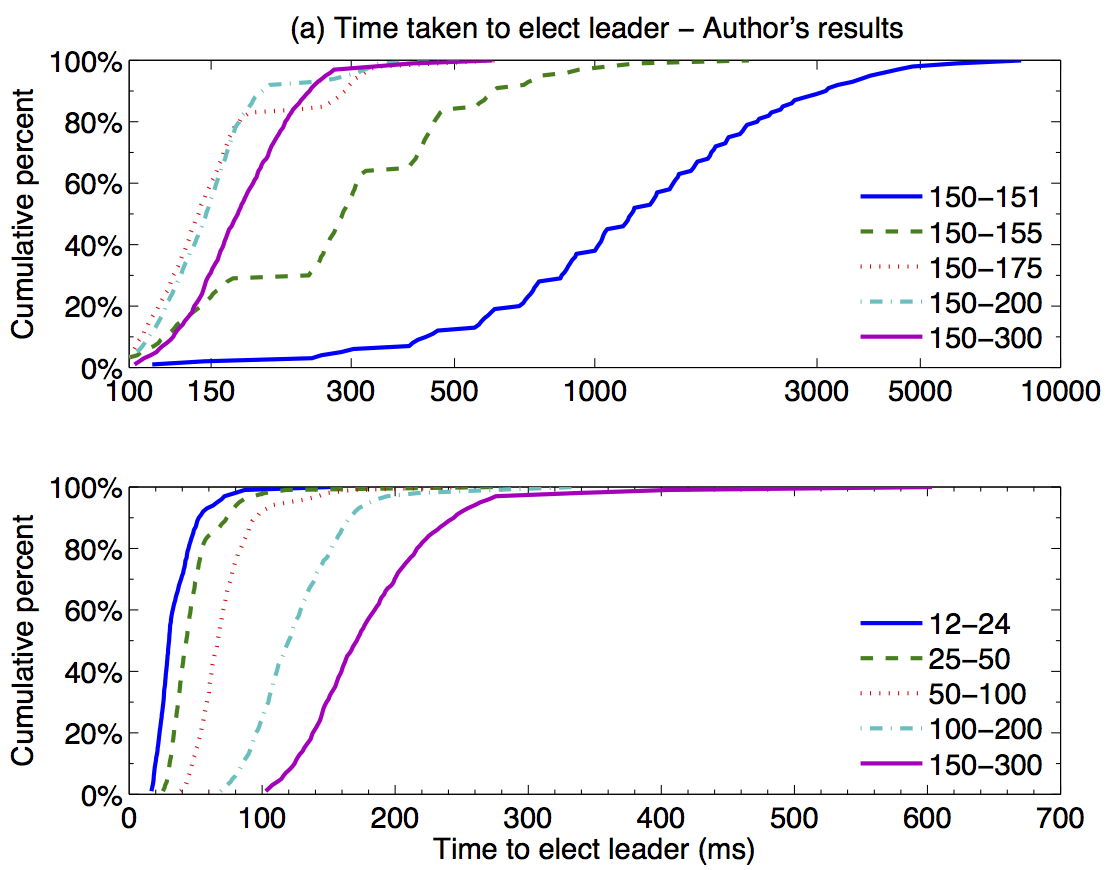

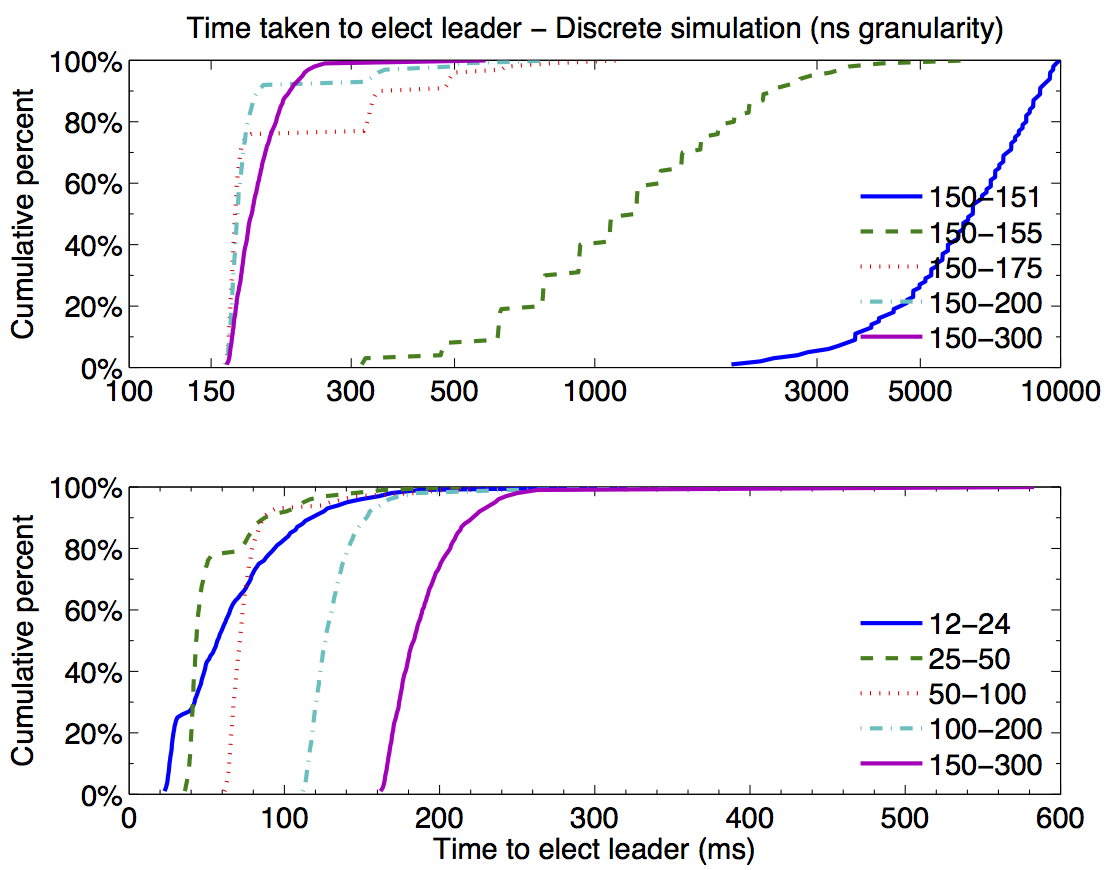

In this study, we repeat the Raft authors’ performance analysis. We developed a clean-slate implementation of the Raft protocol and built an event-driven simulation framework for prototyping it on experimental topologies. We propose several optimizations to the Raft protocol and demonstrate their effectiveness under contention. Finally, we empirically validate the correctness of the Raft protocol invariants and evaluate Raft’s understandability claims.

Below is the key figure of the paper, showing a side-by-side comparison of the simulation results next to the authors’ original results.